Ask NASA Climate

Vanishing Corals: NASA Data Helps Track Coral Reefs

In Brief: Coral reefs, one of the most important ecosystems in the world, are in global decline due to climate change. Data from airborne and satellite missions can fill in the gaps in underwater surveys and help create a…

Aerosols: Small Particles with Big Climate Effects

In Brief: Aerosols are small particles in the air that can either cool or warm the climate, depending on the type and color of the particle. We often think of aerosols as spray paint, insect repellent, or similar substances sprayed…

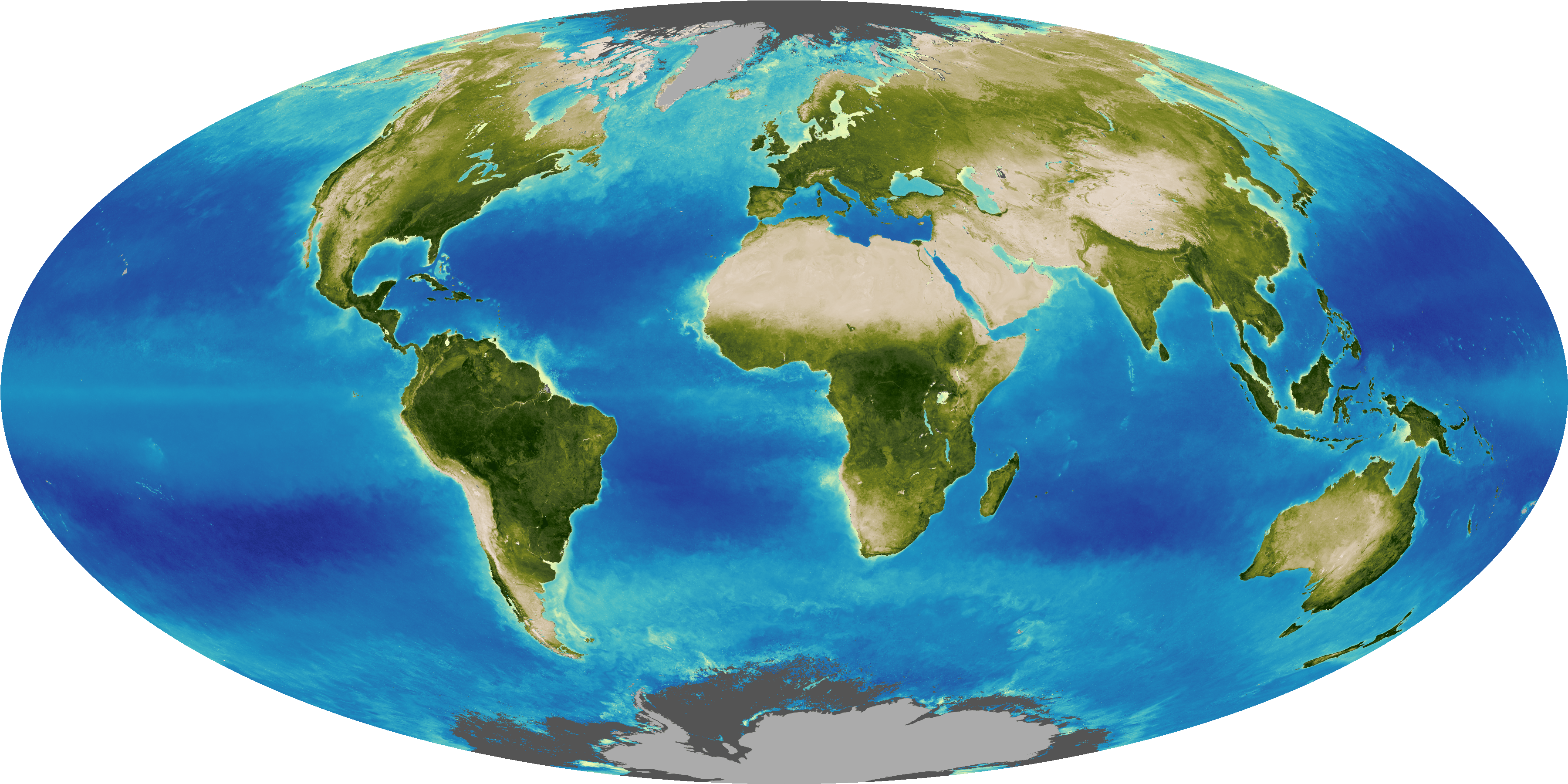

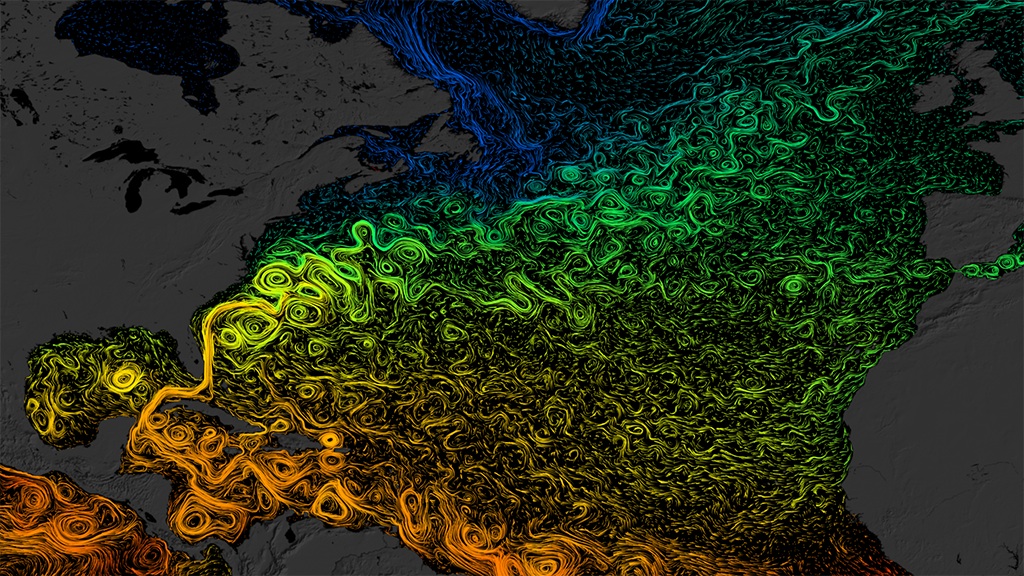

Slowdown of the Motion of the Ocean

In Brief: As the ocean warms and land ice melts, ocean circulation — the movement of heat around the planet by currents — could be impacted. Research with NASA satellites and other data is currently underway to learn more. Dynamic…

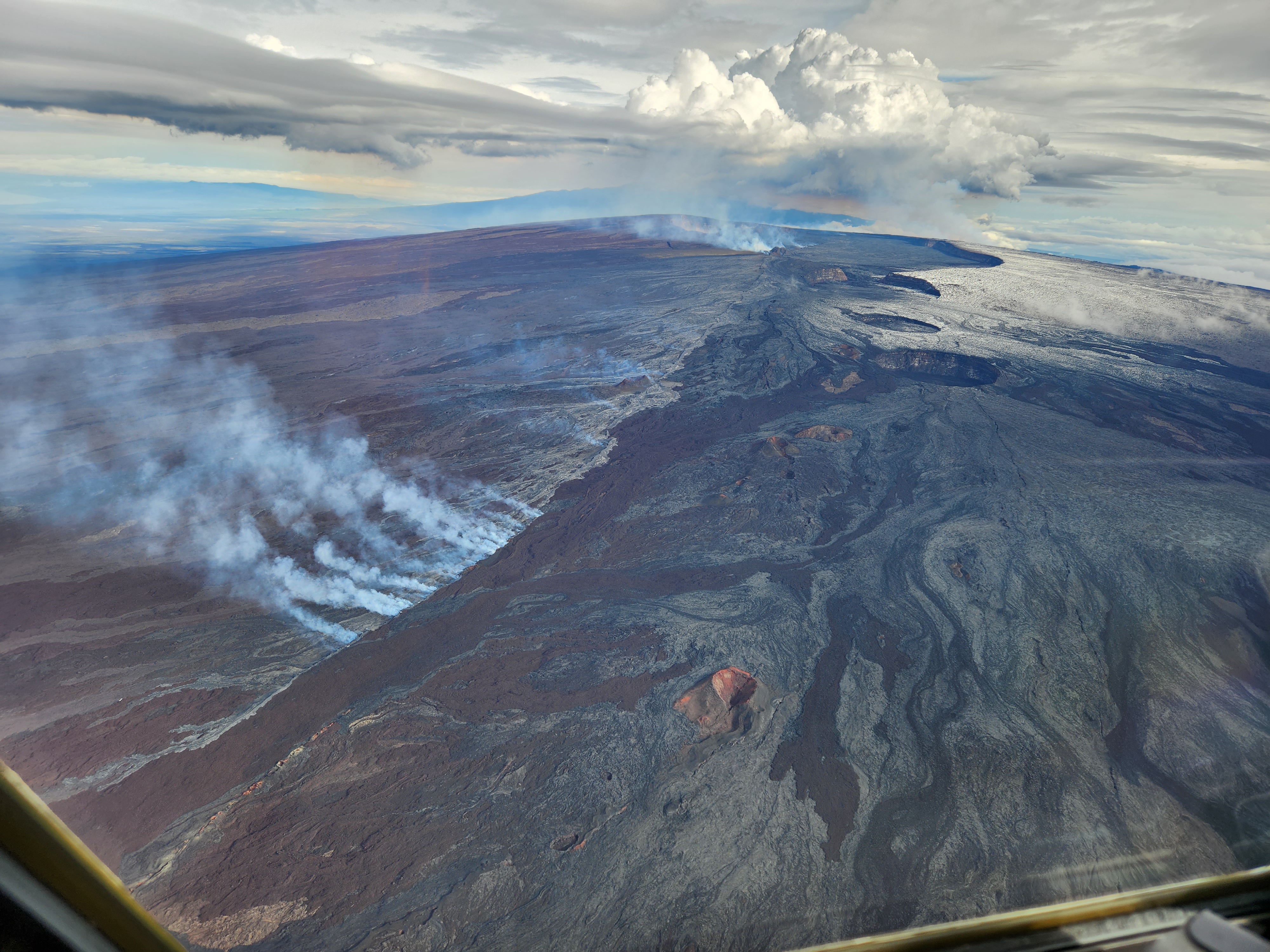

How Do We Know Mauna Loa Carbon Dioxide Measurements Don’t Include Volcanic Gases?

In Brief: The amount of CO2 in the atmosphere is measured at Mauna Loa Observatory, Hawaii, and all around the world. NASA also measures CO2 from space. Data from around the planet all show the same upward trend. The longest…

Too Hot to Handle: How Climate Change May Make Some Places Too Hot to Live

In Brief: As Earth’s climate warms, occurrences of extreme heat and humidity are rising, with significant consequences for human health. Climate scientists are tracking a key measure of heat stress that can warn us of harmful conditions. How hot is…

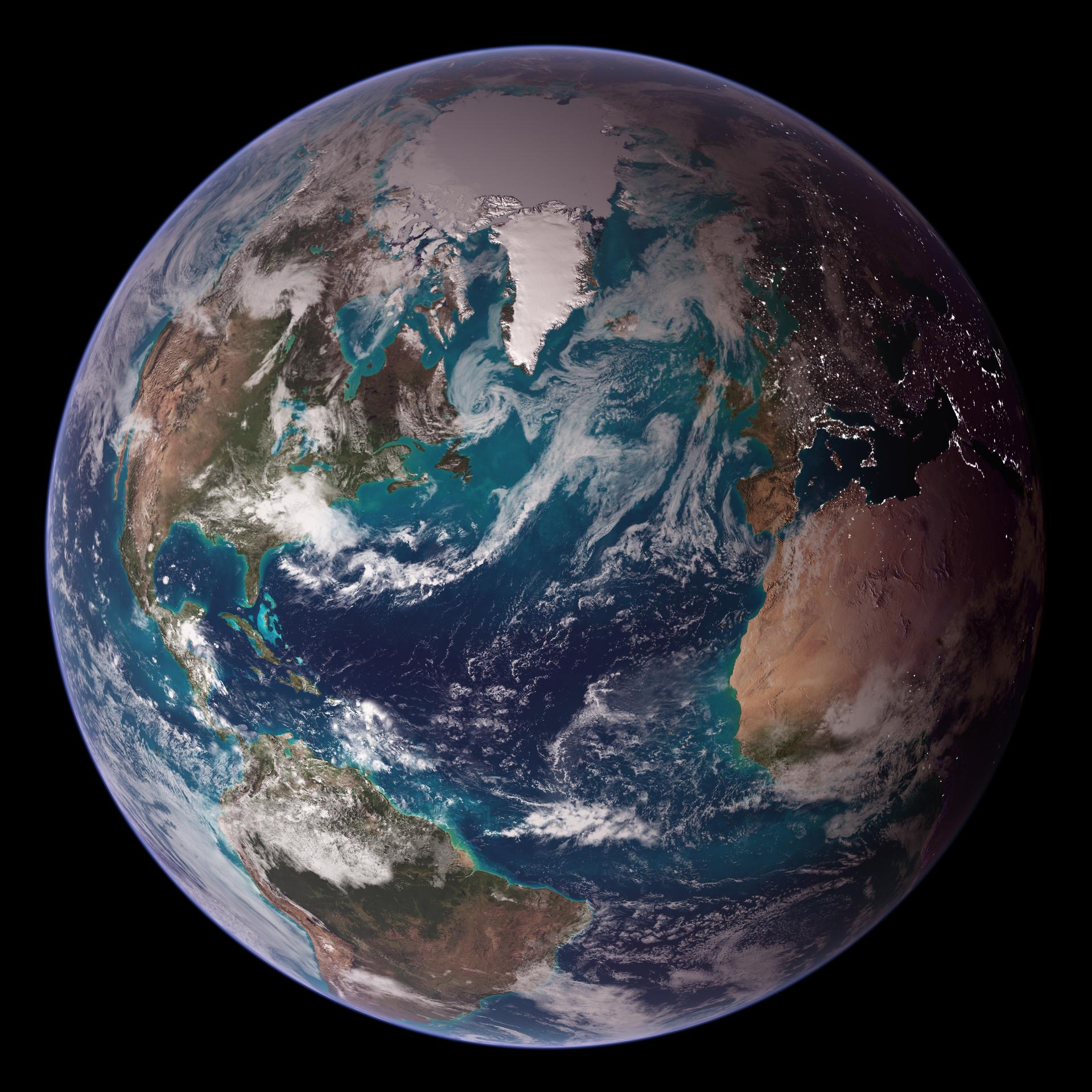

Steamy Relationships: How Atmospheric Water Vapor Amplifies Earth’s Greenhouse Effect

Water vapor is Earth’s most abundant greenhouse gas. It’s responsible for about half of Earth’s greenhouse effect — the process that occurs when gases in Earth’s atmosphere trap the Sun’s heat. Greenhouse gases keep our planet livable. Without them, Earth’s…

Extreme Makeover: Human Activities Are Making Some Extreme Events More Frequent or Intense

In Brief: It’s not your imagination: Certain extreme events, like heat waves, are happening more often and becoming more intense. But what role are humans playing in Earth’s extreme weather and climate event makeover? Scientists are finding clear human fingerprints.…